
Quick answer: An n8n AI agent is the AI Agent node with tools attached (HTTP, database, code, APIs), so an LLM can read context, call those tools, and pick the next step on its own. Without tools, it is just a chatbot in a workflow. The Ship Lean pattern: Claude/Codex builds, n8n runs, human approves.

If you’re trying to figure out whether you even need an agent, start with what an n8n AI agent is and n8n AI agent vs workflow automation. Short version: agents are for judgment calls, not every automation.

If you want the whole path in one place, start with the n8n AI Agents hub. It links the definition, workflow pattern, builder tool, and Claude Code handoff.

This tutorial answers the search cluster Google is already testing:

| Query | Direct answer |
|---|---|
| n8n ai agent | Use the AI Agent node only when the workflow needs judgment. |
| n8n ai agent workflow | Trigger in n8n, let the model make one scoped decision, route the result, then approve risky output. |
| n8n agentic workflow | The agentic part is tool use plus structured decisions, not just an LLM prompt. |
| n8n ai agent node | The node is the reasoning step; n8n still owns triggers, credentials, routing, retries, and run history. |
| what is n8n ai agent | It is an LLM-powered workflow step that can use tools and return a decision inside automation. |

I built my first “agent” in n8n and felt very smart for about ten minutes.

Then I realized I’d just made a fancy ChatGPT call. Input went in. Output came out. Nothing decided. Nothing checked. No tools.

That’s the gap nobody flags in the tutorials: dropping the AI Agent node into a workflow doesn’t make it agentic. It makes it an LLM with a trigger.

This post is the version I wish I’d had when I started: what an n8n AI agent actually is, when to use one instead of a normal workflow, and the pattern I use now that keeps me out of multi-agent spaghetti.

What Changed in 2026

n8n is no longer just “Zapier, but flexible.” It is moving toward a durable AI workflow layer: agent nodes, tools, memory, structured output, retries, credentials, and run history in one canvas.

That matters because the winning pattern is not “let the model do everything.” The winning pattern is:

| Layer | Best owner | Why |
|---|---|---|
| Prompt, schema, tool design | Claude Code or Codex | Repo context, writing, code, and judgment |
| Trigger, credentials, retries | n8n | Durable workflow operations |
| Fuzzy decision | AI Agent node | Reads context and chooses a tool or answer |
| Public/customer action | Human approval | Keeps trust where it belongs |

As of this refresh, n8n’s AI Agent node is a versioned node with current support for tools and output parsers. n8n’s own Tools Agent docs describe the agent as the piece that can choose external tools and return a standard output format. That is the part solo builders should care about: not “AI magic,” but repeatable decisions with visible runs.

Use current language when you build:

- AI Agent node for the reasoning step

- Tools for API/database/app actions

- Structured Output Parser when downstream nodes need clean fields

- Memory only when the task needs prior conversation or prior user state

- Retries and run history for boring reliability

If you only remember one thing, remember this: n8n is the runner, not the whole brain. The AI Agent node should own one fuzzy decision. Everything before and after that should be boring workflow automation.

What Is an n8n AI Agent?

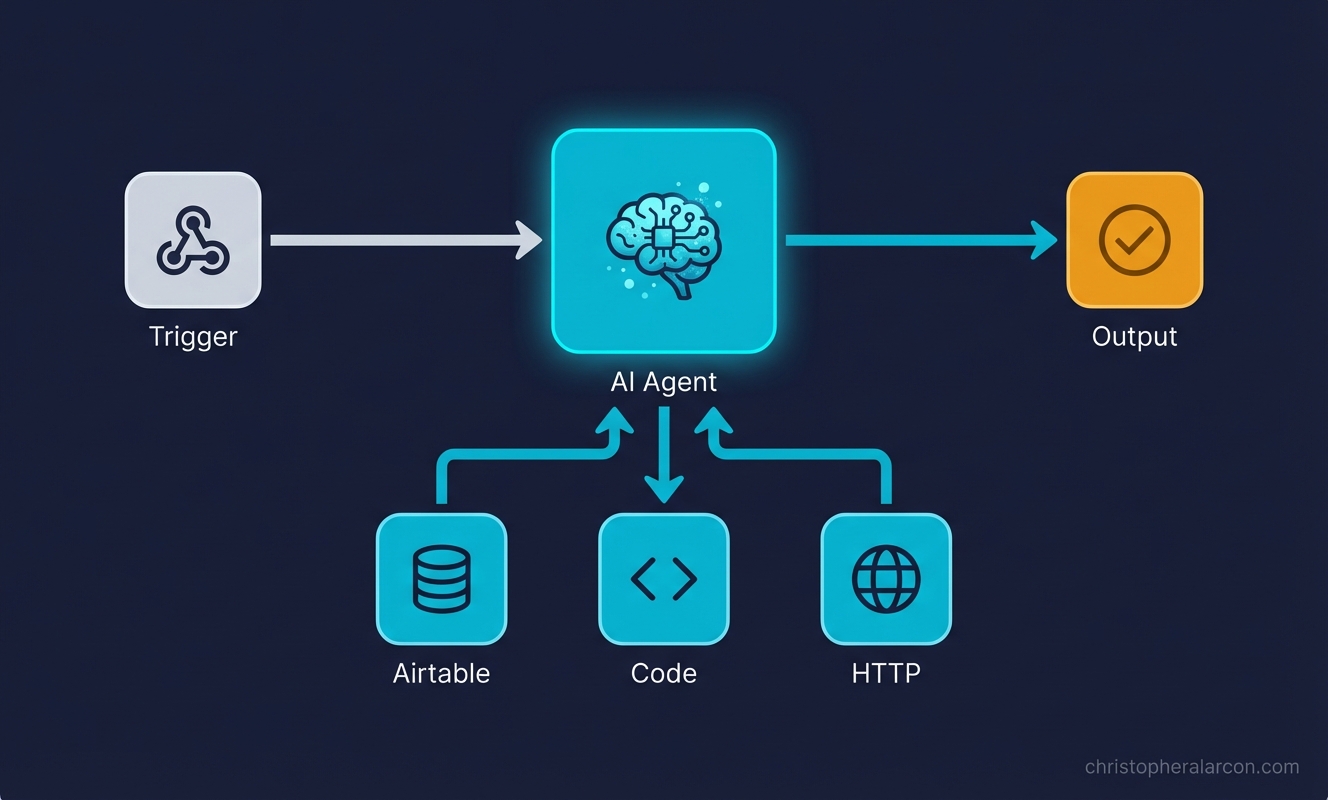

An n8n AI agent is a workflow built around the AI Agent node with tools attached: usually HTTP Request, a database, Airtable, code, or other n8n nodes. That lets the LLM do three things in a loop:

- Read the input and current context

- Decide whether to call a tool (and which one)

- Use the tool’s output to pick the next action or final answer

The “agentic” part is the loop. The model isn’t just generating text. It’s choosing actions based on what it finds.

Without tools, the AI Agent node is a fancy LLM call. With tools, it can look things up, write to a database, hit an API, and reason about the result before answering.
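Here is the loop as a toy Python sketch. It is not how n8n implements the AI Agent node; `fake_model`, `TOOLS`, `lookup_shared_posts`, and `run_agent` are hypothetical names, the history data is made up, and the "model" is a hard-coded stub standing in for the LLM. The point is only the shape: read, maybe call a tool, feed the result back, decide.

```python
from typing import Callable

def lookup_shared_posts(topic: str) -> str:
    """Hypothetical tool: check whether a topic was already shared."""
    history = {"n8n agents": "shared 12 days ago"}  # made-up sample data
    return history.get(topic, "never shared")

# Tool registry: name -> function. In n8n these would be attached tool nodes.
TOOLS: dict[str, Callable[[str], str]] = {"lookup_shared_posts": lookup_shared_posts}

def fake_model(topic: str, observations: list[str]) -> dict:
    """Hard-coded stub standing in for the LLM: look up first, then decide."""
    if not observations:
        return {"type": "tool_call", "tool": "lookup_shared_posts", "arg": topic}
    already_shared = observations[-1] != "never shared"
    return {"type": "final", "decision": "SKIP" if already_shared else "SHARE"}

def run_agent(topic: str, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        step = fake_model(topic, observations)    # read input and context
        if step["type"] == "final":
            return step["decision"]               # final answer ends the loop
        tool = TOOLS[step["tool"]]                # the model picked a tool
        observations.append(tool(step["arg"]))    # feed the result back in
    return "SKIP"  # fail safe if the loop never settles

print(run_agent("n8n agents"))        # SKIP
print(run_agent("vector databases"))  # SHARE
```

Take the tool away and `fake_model` has nothing to observe, which is exactly the "fancy LLM call" case.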

For AEO purposes, this is the clean definition:

An n8n AI agent is a workflow where the AI Agent node can use tools, memory, and structured output to make a judgment step inside a larger automation.

n8n AI Agent vs Regular Workflow Automation: When to Use Which

I default to plain workflow automation. Agents are the exception, not the rule.

| Situation | Use a regular workflow | Use an AI agent |
|---|---|---|
| Inputs are predictable (form fields, structured webhook) | ✅ | |
| Logic fits a clean if-then tree | ✅ | |
| You need messy text classified or summarized | | ✅ |
| You need it to look something up before deciding | | ✅ |
| Output has to be structured every time, no surprises | ✅ | |
| Edge cases keep slipping through your filters | | ✅ |
| Cost per run matters and volume is high | ✅ | |

Rule of thumb I use:

If I can write the rules in 10 minutes, it’s a workflow. If I’d need 50 if-statements and still miss cases, it’s an agent.

A workflow that classifies email tone with keyword matching will miss “I’ve been waiting three weeks and this is getting ridiculous.” An agent reads it and routes it correctly. That’s the kind of decision worth paying tokens for.
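To make the miss concrete, here is a minimal keyword classifier in Python. The keyword list and the `keyword_tone` function are my own illustration, not something from a real workflow, but any list you write in ten minutes will have the same blind spot.

```python
ANGRY_KEYWORDS = {"angry", "furious", "terrible", "refund", "unacceptable"}

def keyword_tone(message: str) -> str:
    """Naive rule: escalate only if the message contains a known angry keyword."""
    words = {word.strip(".,!?\"'").lower() for word in message.split()}
    return "escalate" if words & ANGRY_KEYWORDS else "normal"

print(keyword_tone("This is unacceptable, I want a refund!"))
# escalate
print(keyword_tone("I've been waiting three weeks and this is getting ridiculous."))
# normal
```

The second message is clearly a frustrated customer, and the rule waves it through because none of the trigger words appear.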

If the decision is “did the Stripe webhook fire? then send the receipt,” don’t put an LLM in the path.

For a deeper split, read n8n AI agent vs workflow automation. If the question is whether Codex, Claude Code, or n8n should own the work, use AI coding agent vs workflow automation.

The Ship Lean Agent Pattern

Here’s the layout I use now. It’s not clever. That’s the point.

1. n8n handles the trigger and routing. Webhook, RSS, schedule, Airtable change: n8n is good at this. Don’t make the LLM do it.

2. The LLM handles judgment. This is the AI Agent node (or a Claude Code call via HTTP). It reads context, calls tools, returns a structured decision. One agent, one job.

3. Tools are scoped tight. Read-only when possible. Pre-filtered queries, not “here’s the whole database.” Every tool is a surface area you have to trust.

4. A human approves anything that ships. Sends an email to a customer, charges a card, posts to a public account, deploys code: that goes to a Slack/Telegram approval step before it executes. The agent drafts; you click yes.

5. Claude Code does the building, n8n does the running. I draft prompts, tool definitions, and workflow logic in Claude Code or Codex. n8n runs the workflow on a schedule. GitHub holds the workflow JSON. Vercel hosts anything customer-facing. Each tool does what it’s good at.

That’s the whole stack. No swarm of sub-agents. No “AI orchestrator” picking other agents. One agent, scoped tools, human in the loop where it matters.

The 2026 Build Checklist

Before you touch the n8n canvas, write these five things down:

| Decision | Good answer |
|---|---|
| Agent job | "Score this Search Console query as BUILD, REFRESH, or IGNORE." |
| Input | Query, URL, impressions, clicks, position, current page summary |
| Tools | Read page content, inspect sitemap, write row to task table |
| Output | JSON with decision, reason, priority, next_action |
| Approval | Human approves new public pages and page refreshes |

If you cannot fill in that table, the workflow is not ready. You do not have an agent problem yet. You have a scope problem.
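If you like keeping the checklist next to the workflow JSON, one option is a tiny spec object that tells you which rows are still blank. This is a sketch under my own naming (`AgentSpec` and its fields are hypothetical), filled with the example answers from the table and the approval row deliberately left empty.

```python
from dataclasses import dataclass, fields

@dataclass
class AgentSpec:
    job: str = ""
    inputs: str = ""
    tools: str = ""
    output: str = ""
    approval: str = ""

    def missing(self) -> list[str]:
        """Names of checklist rows that are still blank."""
        return [f.name for f in fields(self) if not getattr(self, f.name).strip()]

spec = AgentSpec(
    job="Score this Search Console query as BUILD, REFRESH, or IGNORE.",
    inputs="Query, URL, impressions, clicks, position, current page summary",
    tools="Read page content, inspect sitemap, write row to task table",
    output="JSON with decision, reason, priority, next_action",
)
print(spec.missing())  # ['approval']
```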

What You Need Before Building

- An n8n instance. I self-host on Hostinger so I’m not paying per execution.

- An API key. I use Claude Sonnet for most agent work because the structured output behaves.

- A clear, single decision you want automated

- Airtable or a database if your agent needs memory

If n8n is new to you, run through the n8n tutorial for beginners first.

Use a manual trigger while you’re building. You’ll run the thing 30+ times tweaking prompts, and you don’t want an RSS feed or webhook firing each time.

Step 1: Pick One Decision

Every agent needs one job. Not three. One.

Bad: “Read my inbox, write replies, schedule meetings, and update the CRM.”

Good: “For each new RSS post, decide if it’s worth sharing with my list. Output SHARE or SKIP and a one-line reason.”

The narrower the scope, the easier it is to prompt, test, and trust. If you can’t describe the agent’s job in one sentence, the agent isn’t ready to be built.

Step 2: Trigger and Input

For the example, we’ll keep using the content filter: an RSS feed pulls new posts, each post becomes input.

The trigger’s job is to give the agent enough context to make the call: title, link, full text, source. If your input is thin, the agent’s decisions will be thin too.
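A small normalization step before the agent helps here. The sketch below assumes field names (`title`, `link`, `full_text`, `source`) and a 200-character threshold that I picked for illustration; your RSS node's actual output fields will likely be named differently.

```python
REQUIRED_FIELDS = ("title", "link", "full_text", "source")
MIN_TEXT_CHARS = 200  # assumed threshold for "enough context"

def build_agent_input(item: dict) -> dict:
    """Keep only the fields the agent needs and flag thin input."""
    payload = {name: str(item.get(name) or "").strip() for name in REQUIRED_FIELDS}
    missing = [name for name, value in payload.items() if not value]
    payload["is_thin"] = bool(missing) or len(payload["full_text"]) < MIN_TEXT_CHARS
    return payload
```

Items flagged `is_thin` can be skipped or sent to a fetch-the-full-article step instead of wasting a model call on a headline.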

Step 3: Add the AI Agent Node

Drop in the AI Agent node. Connect the trigger.

Configure:

- Provider/model: Claude Sonnet is my default for judgment work

- System prompt: define the job, the criteria, and the output format

- Output parser: use structured output when another node needs reliable fields

- Memory: add it only if the workflow needs prior conversation or prior user state

Example system prompt:

    You are a content relevance filter for a newsletter aimed at solo AI builders
    who use Claude Code, n8n, and ship products on the side.

    For each post, decide:
    - Relevance: High / Medium / Low (does it help this audience build or ship?)
    - Quality: High / Medium / Low (is it specific and actionable, or generic?)
    - Decision: SHARE or SKIP
    - Reason: one line, plain language

    Default to SKIP when uncertain. We'd rather miss a marginal post than share a weak one.

This alone is not an agent yet. It’s an LLM with a prompt. It reads, it answers, that’s it.

The next step is what changes that.

Step 4: Attach Tools and Structured Output

Tools are how the agent does things instead of just saying things.

In n8n, common tool options:

- HTTP Request: call any API

- Database / Airtable / Postgres: look up or write history

- Code: custom logic when needed

- Other n8n nodes: wrapped as tools

For the content filter, attach an Airtable tool pointing at a “Shared Posts” table. Update the prompt:

    Before deciding, use the Airtable tool to check the "Shared Posts" table for
    posts shared in the last 30 days. If a similar topic was already covered,
    lean toward SKIP unless this post is meaningfully better or newer.

Now the agent isn’t analyzing a post in a vacuum. It’s checking history, comparing, and using that to decide. That’s the loop.
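The 30-day window is also a good example of scoping a tool: hand the agent a pre-filtered slice, not the whole table. Here is that filter as a plain Python sketch over in-memory rows. The row shape (`topic`, `shared_on`) and the sample dates are invented for illustration; in n8n the same idea would live in the Airtable tool's filter settings.

```python
from datetime import date, timedelta

def recent_shared_topics(rows: list[dict], today: date, days: int = 30) -> list[str]:
    """Return only the topics shared inside the window, not the whole table."""
    cutoff = today - timedelta(days=days)
    return [row["topic"] for row in rows if row["shared_on"] >= cutoff]

rows = [  # made-up sample rows
    {"topic": "n8n agents", "shared_on": date(2026, 1, 20)},
    {"topic": "prompt caching", "shared_on": date(2025, 11, 2)},
]
print(recent_shared_topics(rows, today=date(2026, 2, 1)))  # ['n8n agents']
```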

You don’t need n8n’s sub-agent feature for this. I almost never reach for it. One agent + a few tools handles most things I’ve thrown at it.

When the next node expects clean data, do not make it parse paragraphs. Require structured output:

    {
      "decision": "SHARE",
      "reason": "Specific walkthrough for solo AI builders.",
      "confidence": 0.82,
      "approval_required": true
    }

This is the difference between a demo and a workflow you can run every week.
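If you want a second line of defense after the output parser, a validation step can reject anything the router should not trust. This is a sketch of that check in Python, assuming the four fields from the example above; `parse_decision` and `ALLOWED_DECISIONS` are hypothetical names, and n8n's Structured Output Parser may already cover part of this for you.

```python
import json

ALLOWED_DECISIONS = {"SHARE", "SKIP"}

def parse_decision(raw: str) -> dict:
    """Parse the model's JSON and reject anything the router can't trust."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on prose
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    if data.get("decision") not in ALLOWED_DECISIONS:
        raise ValueError(f"unexpected decision: {data.get('decision')!r}")
    reason = data.get("reason")
    if not isinstance(reason, str) or not reason.strip():
        raise ValueError("reason must be a non-empty string")
    confidence = data.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0 <= confidence <= 1:
        raise ValueError("confidence must be a number between 0 and 1")
    if not isinstance(data.get("approval_required"), bool):
        raise ValueError("approval_required must be true or false")
    return data
```

A `ValueError` here is the signal to alert yourself or retry, rather than letting a half-formed answer reach the next node.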

Step 5: Wire the Decision to Action

The agent returns something like:

    Decision: SHARE
    Reason: Concrete walkthrough of building a Claude Code subagent. Fits the audience.

Downstream, you don’t need a 12-branch if-then. You need one router checking Decision === "SHARE". The complexity lives in the agent’s reasoning, not in the canvas.

For anything that goes out the door, like a tweet, an email, or a published post, route it to a human approval step. A Slack message with Approve/Reject buttons works fine. The agent drafts. You ship.
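The routing logic is small enough to write out. This Python sketch is only an illustration of the rule, with a hypothetical `next_step` function and an assumed list of outbound actions; in n8n it would be an IF or Switch node followed by the approval message.

```python
OUTBOUND_ACTIONS = {"tweet", "email", "publish_post"}  # assumed list of public actions

def next_step(decision: str, action: str) -> str:
    """One router check, plus a human gate for anything public."""
    if decision != "SHARE":
        return "stop"
    if action in OUTBOUND_ACTIONS:
        return "request_approval"  # the agent drafts, a human clicks yes
    return "execute"

print(next_step("SHARE", "tweet"))       # request_approval
print(next_step("SHARE", "save_draft"))  # execute
print(next_step("SKIP", "tweet"))        # stop
```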

If you are building this for Ship Lean-style traffic work, the approval step matters even more. New pages, refreshed titles, comparison claims, and public recommendations should not publish automatically. The workflow should prepare the draft and evidence. A human should approve the point of view.

Step 6: Test on Real Data, Not Your Imagination

Your first version will be wrong. That’s fine. Plan for it.

What I run into most:

- Vague prompts: agent makes inconsistent calls because the criteria are fuzzy

- Tool not actually wired: agent “tries” the tool but the connection is broken

- Output drifts: sometimes structured, sometimes prose

- Real inputs are messier than your test inputs

Fix loop is always: tighten the prompt, add an example or two of correct output, narrow the tool’s scope.
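One way to keep that loop honest is a tiny evaluation harness: label a handful of real inputs by hand, run them through the agent, and look at the disagreements. The sketch below uses hypothetical names (`evaluate`, `stub_decide`) and a stub in place of the real agent call; swap in whatever function actually hits your workflow.

```python
def evaluate(decide, labeled_samples):
    """Run each labeled sample through `decide` and collect the disagreements."""
    misses = []
    for sample in labeled_samples:
        got = decide(sample["text"])
        if got != sample["expected"]:
            misses.append({"text": sample["text"], "expected": sample["expected"], "got": got})
    accuracy = 1 - len(misses) / len(labeled_samples) if labeled_samples else 0.0
    return accuracy, misses

def stub_decide(text: str) -> str:
    """Stand-in for the real agent call, for illustration only."""
    return "SHARE" if "n8n" in text.lower() else "SKIP"

samples = [  # in practice, pull these from real feeds and label them yourself
    {"text": "Building an n8n agent with approval steps", "expected": "SHARE"},
    {"text": "Claude Code subagent walkthrough", "expected": "SHARE"},
]
accuracy, misses = evaluate(stub_decide, samples)
print(accuracy)           # 0.5
print(misses[0]["text"])  # Claude Code subagent walkthrough
```

The misses list is the useful part: each entry is a prompt fix or an example waiting to be added.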

Step 7: Add the Boring Reliability

This is where n8n earns its keep.

For any workflow you plan to keep:

- Log every run somewhere boring: a Sheet, Airtable table, Postgres row, or Notion database.

- Save the input, decision, model, cost estimate, and approval result.

- Add retries where the failure is likely temporary.

- Alert yourself when the workflow fails or the output parser breaks.

- Keep credentials in n8n, not pasted into prompts.
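For a feel of what "retries where the failure is likely temporary" and "log every run" mean in code, here is a minimal Python sketch. `TemporaryError`, `run_with_retries`, and `run_record` are hypothetical names, and the backoff numbers are assumptions. In practice I would lean on n8n's own node settings for retries and use something like this only inside custom code.

```python
import time

class TemporaryError(Exception):
    """Hypothetical marker for failures worth retrying (timeouts, rate limits)."""

def run_with_retries(step, attempts=3, base_delay=1.0, sleep=time.sleep):
    """Call step(), retrying temporary failures with exponential backoff."""
    for attempt in range(1, attempts + 1):
        try:
            return step()
        except TemporaryError:
            if attempt == attempts:
                raise  # out of retries: let the failure alert fire
            sleep(base_delay * 2 ** (attempt - 1))  # 1s, 2s, 4s, ...

def run_record(item_input, decision, model, cost_estimate, approval_result):
    """One log row per run, using the fields listed above."""
    return {
        "input": item_input,
        "decision": decision,
        "model": model,
        "cost_estimate": cost_estimate,
        "approval_result": approval_result,
    }
```

Anything that is not a `TemporaryError` (a bad prompt, a parser failure) fails immediately, which is what you want: retrying those just burns tokens.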

AI builders love the agent part. Operators love the run history. Organic traffic comes from writing about the version that actually survives contact with real inputs.

What My Real n8n Workspace Shows

When I checked my own n8n workspace, the pattern was obvious: lots of experiments, one production workflow doing a clear job.

The active workflow is not a mystical multi-agent swarm. It is a content scheduling runner:

- A Notion trigger starts the run.

- n8n grabs the page, length, and assets.

- A filter, code step, and switch route the item.

- Blotato nodes send the asset to YouTube, Instagram, X, TikTok, and LinkedIn.

- n8n updates the status back in Notion.

That is the lesson. The workflows that survive are not always the flashiest ones. They are the ones with a narrow trigger, clear routing, visible status, and boring handoffs.

Most of the other workflows in my account are paused experiments: idea engines, social research, lead routing, newsletter systems, job prep, payment reminders, and old tests. That is normal. n8n becomes more valuable when you label the experiments, retire the stale ones, and keep production workflows boring enough to trust.

The best public example from that inventory is not the active content scheduler. It is the lead qualification pattern.

The private workflow has the shape that actually teaches the idea:

- A webhook receives a lead.

- n8n enriches the lead data.

- An AI step qualifies the lead.

- A structured parser turns the model response into fields.

- n8n routes the result into hot lead, nurture, Slack, and email paths.

That is the useful proof: the model makes one judgment call, then n8n routes the outcome. For a public template, I would not publish the private workflow raw. I would publish the cleaned pattern instead, with fake sample data and no credentials.
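As a rough picture of that cleaned pattern, here is the same shape in Python with fake data. Everything in it is illustrative: the function names, the free-mail check, the budget field, and the score threshold are assumptions of mine, and `qualify_stub` stands in for the AI step rather than calling a model.

```python
FREE_MAIL_DOMAINS = {"gmail.com", "yahoo.com"}  # assumed enrichment rule

def enrich(lead: dict) -> dict:
    """Deterministic step: add derived fields before the model sees the lead."""
    domain = lead["email"].split("@")[-1].lower()
    return {**lead, "domain": domain, "is_free_mail": domain in FREE_MAIL_DOMAINS}

def qualify_stub(lead: dict) -> dict:
    """Stand-in for the AI step; a real workflow would call the model here."""
    strong = not lead["is_free_mail"] and lead.get("budget", 0) >= 1000
    return {"score": 80 if strong else 30, "reason": "stubbed score for illustration"}

def route(result: dict) -> str:
    """Deterministic step: route on a parsed field, not on prose."""
    return "hot_lead" if result["score"] >= 70 else "nurture"

def handle_lead(lead: dict) -> str:
    return route(qualify_stub(enrich(lead)))

print(handle_lead({"email": "ana@acme.io", "budget": 5000}))    # hot_lead
print(handle_lead({"email": "bob@gmail.com", "budget": 5000}))  # nurture
```

Only the middle function would ever involve a model. The steps on either side stay plain, testable code.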

You can download that starter pattern here: n8n human approval workflow JSON. I also published the proof asset on GitHub: n8n AI lead qualification workflow with human approval.

What I Got Wrong Early

My first n8n agent system was a faceless YouTube pipeline: Reddit scrape to script to 11Labs voiceover to Creatomate render. Took me a couple weeks. Had four agents where one would’ve done.

It worked. The output wasn’t great, but it ran. The lesson wasn’t “agents are powerful.” It was: I built before I validated, and I overcomplicated every step.

The rewrite was always the same: collapse to one agent, scope its tools, put a human at the publish step.

That’s the version I’d build today, and it’s the version above.

Common Mistakes That Keep Your Agent Dumb

1. Using the AI Agent node with no tools. You built a chatbot. Tools = autonomy. No tools = no decisions worth calling agentic.

2. Multi-agent setups before you need them. Sub-agents and agent loops exist. Skip them until a single agent has clearly hit its ceiling. It usually hasn’t.

3. Vague system prompts. “Make good decisions” isn’t a prompt. Spell out criteria, output format, and what to do when uncertain.

4. No human approval on outbound actions. The first time an agent emails a customer something weird, you’ll wish you had this. Add it before you need it.

5. Testing only on data you wrote. Real inputs break things synthetic ones don’t. Test on actual feeds, actual emails, actual rows.

6. Adding memory because it sounds advanced. Memory is useful for ongoing conversations. It is usually unnecessary for one-shot scoring, routing, enrichment, and drafting workflows. Start stateless, then add memory only when the missing context is actually hurting results.

7. Treating structured output as optional. If n8n needs to route the result, make the agent return fields. Prose is for humans. JSON is for the next node.

Where to Go From Here

Pick one decision you make repeatedly that’s annoying because it requires reading something: inbox triage, lead scoring, content filtering, support routing.

Build that. One agent, one tool, one decision. Run it manually for a week. Watch where it gets confused. Tighten the prompt.

Once that’s working, the second one takes half the time. The third feels normal.

For more patterns, see 7 n8n workflow examples, what an n8n AI agent is, n8n AI agent vs workflow automation, n8n vs Make for AI agent workflows, and Codex vs n8n if you’re still deciding which side of the line your use case sits on.

The AI Agent node is a building block, not the whole building. Tools are what turn it into something that decides. Keep the rest of the stack boring: n8n for plumbing, Claude Code for building, GitHub and Vercel for everything that ships. Then you can spend your time on the decisions, not the wiring.

Written by Chris Alarcon

Chris Alarcon builds Ship Lean: practical AI systems for solo builders who need their product work to turn into distribution and revenue. He shares the exact Claude Code, n8n, content, and workflow systems he uses in public.
